For our April 2026 meetup, we had Sara Kear, the CMO of Condado Tacos, talk about how analytics and data can help with branding. Yes, Condado Tacos were served. If you missed out, sadly cbusdaw does not do delivery.

Sara pointed out that branding is really about how your brand makes people feel. We might think of “branding” as a package of fonts, logos, and colors, but it’s better thought of as what your audience thinks about you. This might seem like the softest, most qualitative kind of data, but it can be some of the most powerful for figuring out what your company is doing right and wrong.

In particular, Condado focused on understanding exactly who their best customers were. It turned out that one segment of their customers, the “socializers”, drove 70% of Condado’s repeat business! Listening to customers through social media, surveys, and focus groups, and then tying some of that data to actual customer behavior, allowed Condado to make better decisions. That’s exactly the kind of data-driven decision making we’re always talking about, yet it so often proves elusive.

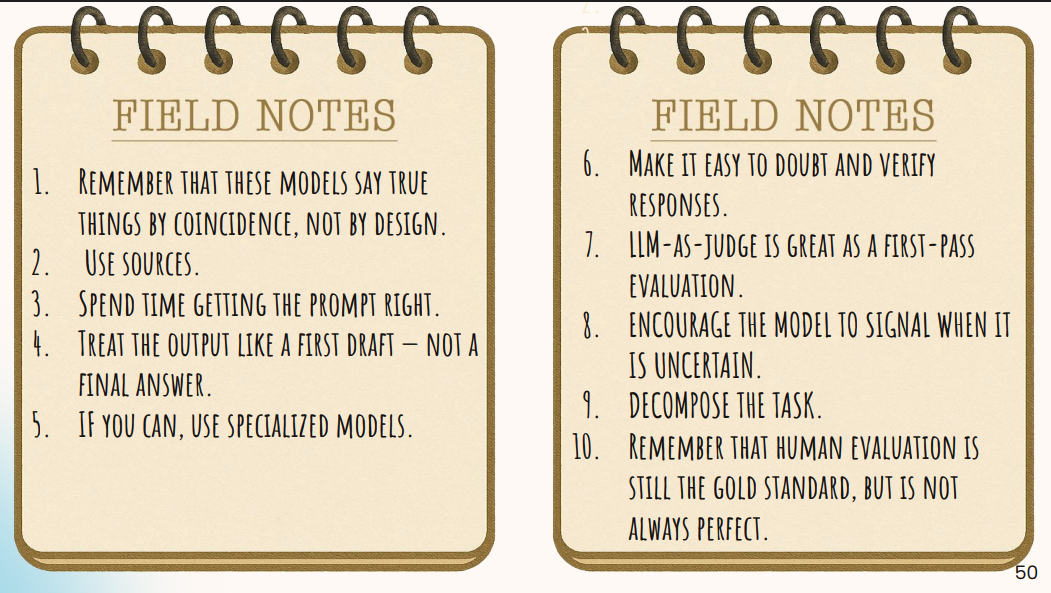

Sara also explained that the goal wasn’t to get the data perfect or to have that mythical 360-degree view of what customers did. It was to take a human-centered approach, starting by listening to people.

We were also happy to make a donation to the Global Foundation for Peroxisomal Disorders on Sara’s behalf for this talk.

Upcoming events we mentioned included:

- Wakeup Startup: April 16 and May 21
- Columbus Startup Week: May 5-7 at COhatch Polaris
- DataConnect: October 29-30
- Tim Wilson at Innovate New Albany’s TIGER Talks: May 15

Sara’s Slides

And of course a few pictures!