June 2025 Recap – Reducing LLM Hallucinations

Our June event featured Ash Lewis from Ohio State Linguistics talking about why LLMs give us incorrect information so often, and strategies we can use to reduce this behavior.

While the standard term for this is of course “hallucination”, Ash pointed out that the term “confabulation” more accurately describes what is happening. Hallucination implies that the LLM is incorrectly perceiving something, however what we’re describing is not misperception. It’s AI creating statistically probable, yet incorrect information.

Wikipedia agrees with Ash and described hallucination thusly:

This term draws a loose analogy with human psychology, where hallucination typically involves false percepts. However, there is a key difference: AI hallucination is associated with erroneously constructed responses (confabulation), rather than perceptual experiences.

Of course as any linguist would point out, we don’t get to prescriptively say how language should be used… so we’re pretty much stuck with “hallucination”.

Whatever the term, this happens because everything that an LLM creates is simply what is statistically probable. Output which is also true is coincidental to the process. In other words: it’s guessing about everything, it just happens to be right enough to be very useful.

If you think that this is an issue of the past limited to older models, here’s current example from o4-mini:

During the talk we verified our group’s nerd cred by knowing how this guy found out who his dad was.

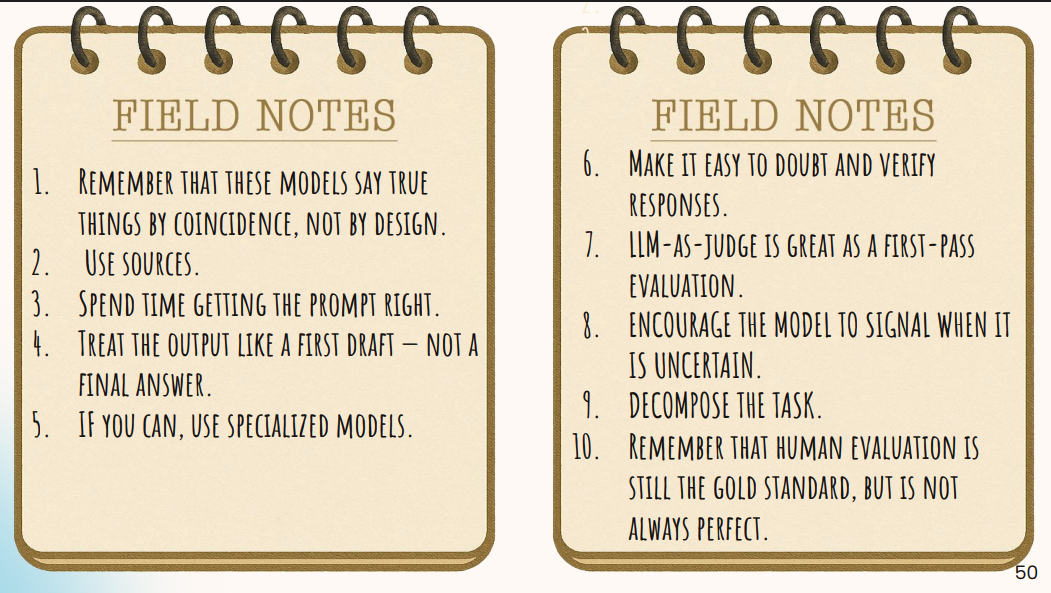

So how do we reduce this problem as much as possible? Here’s Ash’s helpful field guide notes:

Our prompt about our group’s history has set ourselves up for failure by breaking most of Ash’s rules.

We made the following mistakes:

- Not breaking down (decomposing) our ask into small components. We didn’t ask for a more granular question, like the year the group started or a list of previous topics, we asked for a whole history.

- Not encouraging the LLM to check its own work, step through its reasoning, or provide sources, or indicate uncertainty. As soon as we follow-up and ask things like, “what is your source for attendance doubling” it will say it has none.

- Not letting the LLM search the web (this is a form of RAG). The o-series models from OpenAI are pretty good at knowing when they should do this, and likely would have done so in this scenario.

- Not getting more than one response. When asked a second time with the same prompt, it said it didn’t have enough information to give a response.

Ash then dug into details about the work she is doing with COSI (the Columbus science museum) creating an AI agent that can help visitors with questions about the museum. This work attempts to limit hallucinations as well as provide the museum a more affordable and privacy-friendly solution than just sending things to ChatGPT.

She also helpfully has provided us with her slides!

As usual — especially when it’s a talk on AI — the crowd had a lot of great questions!

And a few pictures from the event. All totally real. Really. Maybe?